Support’s notes from the field

Support at PlanetScale

The Support team at PlanetScale is a multicultural, multinational team distributed around the globe, which enables us to support our users in almost any time zone on this planet. Internally, we are organized into two groups: our Customer Engineering team, which works closely with our Enterprise customers, and our Cloud Support team, which supports our Base plan customers.

All of us have a technical background. Some of us develop software and contribute to FOSS projects, or have been building and maintaining infrastructure at scale for years. Others have worked on large support teams at companies such as GitHub, Google, and Microsoft.

Our combined experience in the industry adds up to a couple hundred years of knowledge. When you approach us with your database-centric problems, chances are we will be able to help swiftly resolve the issue or at least put you on the right track to get there yourself. And if it's something totally new, well, kudos to you! We are about to learn something new, and we'll be learning together.

If you want to get in touch with us, the easiest way is to open a ticket in our support portal or to start a new conversation in our public discussions board on GitHub. For our Enterprise customers, we also offer phone-based escalations and joint Slack channels where we can collaborate.

For a good overview of the different support plans, support targets, and service-level agreements (SLAs), please review our official documentation and our support portal.

In the rest of this article, we'll take a look at the most common places we see folks hit a wall.

Common issues users run into on PlanetScale

SSL/TLS certificate errors with PlanetScale

SSL/TLS certificate verification errors come in various forms, but what they all have in common is that they prevent you from connecting to your PlanetScale database, so it is immediately noticeable and a hard blocker for some. Most of the time, however, these errors are straightforward to resolve.

To understand what leads to these errors, it is good to have a basic understanding of how SSL/TLS certificates work.

How SSL/TLS certificates work

It all starts with a certificate authority (CA). A CA issues, signs, and stores SSL/TLS certificates. These certificates exist so that a client can connect to a server securely and so that network traffic between the two gets encrypted. You can set up your own CA and issue your own certificates, but for certificates to be verified automatically in modern browsers, companies such as ours need to use a certificate that was issued by a common third-party CA.

PlanetScale's SSL/TLS certificate was issued by Let's Encrypt, a nonprofit CA run by the Internet Security Research Group (ISRG), which has issued certificates for more than 300 million websites to date.

When connecting to PlanetScale, your computer validates our SSL/TLS certificate by looking up the issuer of our certificate and comparing it against the trusted root certificate authorities store on your computer. When it finds a match, it checks if our certificate is valid and signed by the CA. If that turns out to be OK, it lets you connect securely.

Most modern operating systems have a root CA store, and your software usually knows where it is:

- On Mac OS X, the store is in /etc/ssl/cert.pem

- On Linux, it depends on the distribution, but it almost always is either in /etc/ssl or /etc/pki

- On Windows, the root CA store is an internal database available via the CryptoAPI
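
If you are unsure which store your own tooling picks up, you can ask the TLS library directly. The following is a minimal sketch using Python's standard ssl module. What it reports depends on how your Python and OpenSSL were built, so treat the output as a hint rather than a definitive answer. The function name is ours and purely illustrative.

```python
import ssl


def default_ca_locations():
    """Return the CA file and CA directory that Python's ssl module uses by default."""
    paths = ssl.get_default_verify_paths()
    # cafile/capath are None when the compiled-in location does not exist on this machine.
    return {
        "cafile": paths.cafile,
        "capath": paths.capath,
        "openssl_cafile": paths.openssl_cafile,
        "openssl_capath": paths.openssl_capath,
    }


if __name__ == "__main__":
    for name, value in default_ca_locations().items():
        print(f"{name}: {value}")
```

If both cafile and capath come back empty, that is a strong sign that no root CA bundle is installed where your software expects it.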

Solving SSL/TLS errors

Most SSL/TLS errors that we see occur because of these trusted CA root certificates not being installed on the computer and, therefore, the software being unable to verify our certificate. On Linux, you can often solve this by installing the ca-certificates package. This package bundles the CA certificates from the Mozilla CA Certificate Program.

Most database drivers know where to find these locally installed CA certificates, but sometimes you may need to point the database driver to it. We have the most common paths for the root CA store listed in our documentation, along with a more thorough explanation of how SSL/TLS works.
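
When a driver does need to be pointed at the store, it helps to find out first which bundle actually exists on the machine. Here is a small sketch of that idea in Python. The path /etc/ssl/cert.pem is mentioned earlier in this article; the other two candidates are our assumptions about common Debian-style and RHEL-style layouts, and the function names are hypothetical.

```python
import os
import ssl

# Candidate bundle locations. /etc/ssl/cert.pem is mentioned earlier in this
# article; the other two are assumptions about common Debian- and RHEL-style layouts.
CANDIDATE_CA_BUNDLES = [
    "/etc/ssl/cert.pem",
    "/etc/ssl/certs/ca-certificates.crt",
    "/etc/pki/tls/certs/ca-bundle.crt",
]


def find_ca_bundle(candidates=CANDIDATE_CA_BUNDLES):
    """Return the first candidate path that exists as a file, or None."""
    for path in candidates:
        if os.path.isfile(path):
            return path
    return None


def build_tls_context(ca_bundle=None):
    """Build a verifying TLS context, optionally using an explicit CA bundle."""
    # With cafile=None, Python falls back to the system default trust store.
    return ssl.create_default_context(cafile=ca_bundle)
```

The path returned by find_ca_bundle is what you would hand to your driver's CA option. The name of that option varies from driver to driver, so check your driver's documentation.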

Other SSL/TLS-related errors can occur because libssl is too old, or because other libraries in use were not compiled with SSL support or simply do not offer any SSL/TLS support at all. If you're interested in learning more about such a scenario, we had an interesting case with Debian Buster on our public discussions board a few weeks ago.

In any case, these are things that you should NOT do:

- Disable SSL/TLS certificate verification

- Issue and sign your own SSL certificate

Disabling certificate verification makes your connection susceptible to man-in-the-middle attacks, in which an attacker secretly relays and possibly alters the communication between two parties. For your communication with our servers to be secure, you must leave certificate verification enabled!
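
If you want to catch a disabled check before it reaches production, a small guard can help. The sketch below uses Python's standard ssl module; the helper name assert_verifying is hypothetical, and how you obtain the context from your own driver or framework will differ.

```python
import ssl


def assert_verifying(context):
    """Raise if a TLS context would skip certificate or hostname verification."""
    if context.verify_mode != ssl.CERT_REQUIRED:
        raise ValueError("certificate verification is disabled")
    if not context.check_hostname:
        raise ValueError("hostname checking is disabled")
    return context


# The default client context verifies both the certificate chain and the hostname.
safe = assert_verifying(ssl.create_default_context())
```

Calling the guard once at application startup is usually enough to notice a stray "skip verification" setting that was left over from debugging.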

We also sometimes see users creating their own CA and SSL certificates and then sending us their certificates as well as the matching private key. You will never ever need to do that when using PlanetScale! It's an easy trap to fall into if you don't know enough about SSL/TLS and, from experience, it can get overwhelming and confusing quickly.

Whatever the nature of your SSL/TLS issue is, we will be happy to help get to the bottom of it if you open a ticket with us or if you open an issue in our discussions board.

Integrating third-party platforms

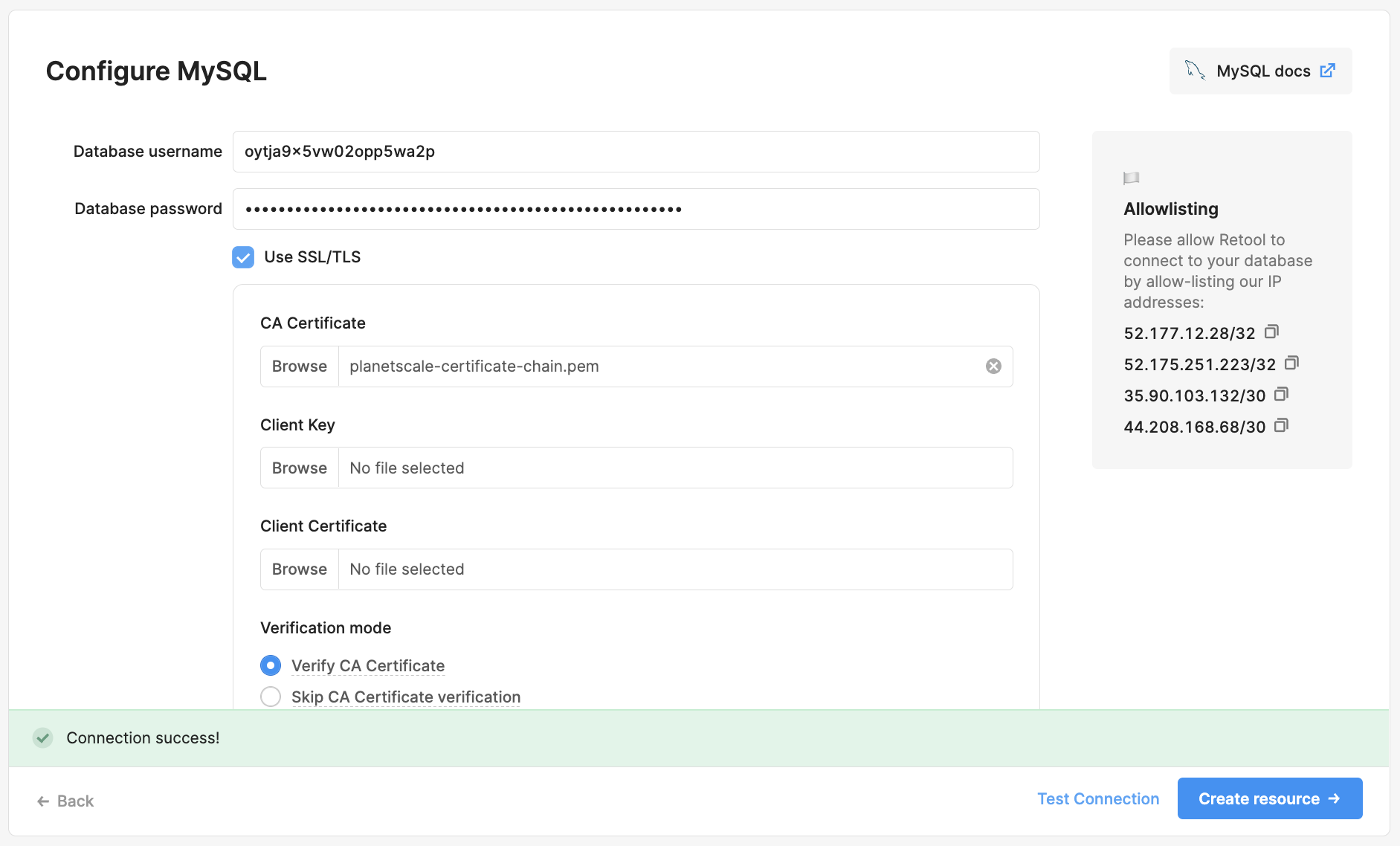

Sometimes, you need to integrate third-party platforms such as Google's Data Studio or Retool, but when your preferred platform does not support the common SSL/TLS certificate authorities' root certificates, you can run into trouble establishing a connection to your PlanetScale database.

When you enable SSL/TLS in your preferred tool, you sometimes stumble upon fields such as CA Cert, Client Key, or Client Cert, which normally only exist for the user to add their organization's self-signed SSL/TLS certificate. Remember, that's the case where you act as your own certificate authority and issue your own SSL/TLS certificates, which is not needed in the context of PlanetScale.

If you leave these fields blank and hope for the best, you normally encounter an error message such as this one:

Unable to get local issuer's certificate.

We can make use of these fields, though, to work around the issue. When there is a field where you can upload a CA Cert, you can upload Let's Encrypt's CA root certificate or, if it's a text field, paste it in. It is a regular text file after all.

Let's Encrypt's root certificate can be downloaded from their website. It is called ISRG Root X1, and you will need to download it in .pem format.

For your convenience, below you will find Let's Encrypt's current root certificate:

-----BEGIN CERTIFICATE-----
MIIFazCCA1OgAwIBAgIRAIIQz7DSQONZRGPgu2OCiwAwDQYJKoZIhvcNAQELBQAw
TzELMAkGA1UEBhMCVVMxKTAnBgNVBAoTIEludGVybmV0IFNlY3VyaXR5IFJlc2Vh
cmNoIEdyb3VwMRUwEwYDVQQDEwxJU1JHIFJvb3QgWDEwHhcNMTUwNjA0MTEwNDM4
WhcNMzUwNjA0MTEwNDM4WjBPMQswCQYDVQQGEwJVUzEpMCcGA1UEChMgSW50ZXJu
ZXQgU2VjdXJpdHkgUmVzZWFyY2ggR3JvdXAxFTATBgNVBAMTDElTUkcgUm9vdCBY
MTCCAiIwDQYJKoZIhvcNAQEBBQADggIPADCCAgoCggIBAK3oJHP0FDfzm54rVygc
h77ct984kIxuPOZXoHj3dcKi/vVqbvYATyjb3miGbESTtrFj/RQSa78f0uoxmyF+
0TM8ukj13Xnfs7j/EvEhmkvBioZxaUpmZmyPfjxwv60pIgbz5MDmgK7iS4+3mX6U
A5/TR5d8mUgjU+g4rk8Kb4Mu0UlXjIB0ttov0DiNewNwIRt18jA8+o+u3dpjq+sW
T8KOEUt+zwvo/7V3LvSye0rgTBIlDHCNAymg4VMk7BPZ7hm/ELNKjD+Jo2FR3qyH
B5T0Y3HsLuJvW5iB4YlcNHlsdu87kGJ55tukmi8mxdAQ4Q7e2RCOFvu396j3x+UC
B5iPNgiV5+I3lg02dZ77DnKxHZu8A/lJBdiB3QW0KtZB6awBdpUKD9jf1b0SHzUv
KBds0pjBqAlkd25HN7rOrFleaJ1/ctaJxQZBKT5ZPt0m9STJEadao0xAH0ahmbWn
OlFuhjuefXKnEgV4We0+UXgVCwOPjdAvBbI+e0ocS3MFEvzG6uBQE3xDk3SzynTn
jh8BCNAw1FtxNrQHusEwMFxIt4I7mKZ9YIqioymCzLq9gwQbooMDQaHWBfEbwrbw
qHyGO0aoSCqI3Haadr8faqU9GY/rOPNk3sgrDQoo//fb4hVC1CLQJ13hef4Y53CI
rU7m2Ys6xt0nUW7/vGT1M0NPAgMBAAGjQjBAMA4GA1UdDwEB/wQEAwIBBjAPBgNV
HRMBAf8EBTADAQH/MB0GA1UdDgQWBBR5tFnme7bl5AFzgAiIyBpY9umbbjANBgkq
hkiG9w0BAQsFAAOCAgEAVR9YqbyyqFDQDLHYGmkgJykIrGF1XIpu+ILlaS/V9lZL
ubhzEFnTIZd+50xx+7LSYK05qAvqFyFWhfFQDlnrzuBZ6brJFe+GnY+EgPbk6ZGQ
3BebYhtF8GaV0nxvwuo77x/Py9auJ/GpsMiu/X1+mvoiBOv/2X/qkSsisRcOj/KK
NFtY2PwByVS5uCbMiogziUwthDyC3+6WVwW6LLv3xLfHTjuCvjHIInNzktHCgKQ5
ORAzI4JMPJ+GslWYHb4phowim57iaztXOoJwTdwJx4nLCgdNbOhdjsnvzqvHu7Ur
TkXWStAmzOVyyghqpZXjFaH3pO3JLF+l+/+sKAIuvtd7u+Nxe5AW0wdeRlN8NwdC
jNPElpzVmbUq4JUagEiuTDkHzsxHpFKVK7q4+63SM1N95R1NbdWhscdCb+ZAJzVc
oyi3B43njTOQ5yOf+1CceWxG1bQVs5ZufpsMljq4Ui0/1lvh+wjChP4kqKOJ2qxq
4RgqsahDYVvTH9w7jXbyLeiNdd8XM2w9U/t7y0Ff/9yi0GE44Za4rF2LN9d11TPA
mRGunUHBcnWEvgJBQl9nJEiU0Zsnvgc/ubhPgXRR4Xq37Z0j4r7g1SgEEzwxA57d
emyPxgcYxn/eR44/KJ4EBs+lVDR3veyJm+kXQ99b21/+jh5Xos1AnX5iItreGCc=
-----END CERTIFICATE-----

Please note that if we ever change our SSL provider or update aspects of our SSL/TLS certificate as part of its regular renewal, you would need to update the certificate you have uploaded as well. It's not something that happens often and we do not have plans to change it anytime soon, but if you see a third-party platform having trouble connecting after a few months, make sure to check if our SSL/TLS certificate has changed and update when necessary.
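
One low-effort way to check whether the root certificate you uploaded still matches the one currently published is to compare fingerprints instead of eyeballing the base64 text. The following sketch assumes you have both certificates available as PEM text. The function names are hypothetical, and the code only compares the two inputs; it does not validate either certificate.

```python
import hashlib
import ssl


def pem_fingerprint(pem_text):
    """Return the SHA-256 fingerprint (hex) of a single PEM-encoded certificate."""
    # PEM_cert_to_DER_cert strips the BEGIN/END lines and base64-decodes the body.
    der = ssl.PEM_cert_to_DER_cert(pem_text.strip())
    return hashlib.sha256(der).hexdigest()


def root_changed(stored_pem, current_pem):
    """True when the certificate you uploaded differs from the one you just downloaded."""
    return pem_fingerprint(stored_pem) != pem_fingerprint(current_pem)
```

Because the comparison is done on the decoded bytes, differences in line wrapping or surrounding whitespace between the two PEM files do not cause a false alarm.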

If you think, after having read through all that SSL/TLS-related information, there's got to be an easier way, then I have got something for you!

PlanetScale supports data integration engines such as Airbyte and Stitch. And for measuring usage and performance metrics, we also have a Datadog integration.

If you're having trouble connecting your preferred third-party tool, let us know either by creating a ticket or opening an issue in our discussions board, and we'll look into it together.

Data imports

When ramping up on PlanetScale, you will eventually get to the point where you want to import your data, and sometimes this turns out to be more complex than initially thought. Some features, such as stored procedures, are not supported; you can find the complete MySQL/Vitess compatibility list in the documentation. Hardware can also be a limiting factor.

So that there is no misunderstanding: We take great pride in our ability to scale along with your business, and we will do our absolute best so that you will never have to worry about your database again, but sometimes all it takes to take a database cluster to its limits is a simple, but effective:

cat backup.sql | pscale shell

It is easy to quickly overwhelm your database with heavy IO operations if you're not careful. A huge influx of concurrent writes to your database will lead to degraded service, and while our database clusters heal themselves automatically, it's obviously not a great first impression.

This is why we have built an importer.

Our importer uses MySQL's proven, reliable replication system under the hood. If you go through the import process, your PlanetScale database registers as a replica, making the data copying process trivial and giving you full control over when to make the cut-over.

You can point your application to your PlanetScale database once it's been registered as a replica. We will route any writes back to the primary until the point where you have elevated PlanetScale to become the primary itself. This gives you the ability to test performance and make any necessary updates before you fully switch over to us, an option that is often overlooked.

One important caveat is that schema changes do not get replicated between databases in either direction. Please make sure not to execute any DDL (Data Definition Language) operations such as CREATE, DROP, ALTER or TRUNCATE while the import is ongoing.

Sometimes, we also see users having issues with their MySQL server's configuration. You will need to be able to change your MySQL server's gtid_mode, its binlog_format, as well as its expiration times (either expire_logs_days or binlog_expire_logs_seconds or both). Otherwise, you will not be able to use our importer.
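
Before starting an import, it can be handy to compare your server's current settings against the values the importer expects. Below is a minimal sketch of such a comparison. The target values shown (gtid_mode set to ON and binlog_format set to ROW) are our assumptions for illustration only, so please confirm the exact requirements in the importer documentation. The function name and the sample input are hypothetical.

```python
def find_mismatches(actual, expected):
    """Compare server variables (name -> value) and return the ones that differ.

    The comparison is case-insensitive because MySQL reports values such as
    ON/ROW in upper case while config files often use lower case.
    """
    mismatches = {}
    for name, wanted in expected.items():
        got = actual.get(name)
        if got is None or str(got).upper() != str(wanted).upper():
            mismatches[name] = (got, wanted)
    return mismatches


# Assumed target values, for illustration only; confirm them in the importer docs.
ASSUMED_TARGETS = {"gtid_mode": "ON", "binlog_format": "ROW"}

# Example input, e.g. collected from SHOW VARIABLES on your current primary.
current = {"gtid_mode": "OFF", "binlog_format": "row"}
print(find_mismatches(current, ASSUMED_TARGETS))
```

An empty result means every variable you listed already has the expected value; anything else tells you which setting to change and what it is set to right now.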

If you cannot use our importer, there are other tools you could use, such as mysqldump or MySQL Workbench. Another great option is aquarapid/go-mydumper, which is based on the excellent gomydumper utility and was adapted to work with Vitess by my colleague Jacques.

Whatever you end up choosing, if the tool gives you control over concurrency, a good ground rule is to start with a low concurrency rate and to be conservative with increases. Otherwise, depending on the size of your backup, you may end up in the situation described earlier in this section.
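
If your tool of choice does not offer such a setting, you can approximate it yourself. The sketch below shows the most conservative form: a single connection, small batches, and a pause between batches. The execute callable is hypothetical and stands for whatever sends one statement to your database (for example, a DB-API cursor's execute method). The default batch size and pause are arbitrary starting points, not recommendations.

```python
import time


def batches(items, size):
    """Yield consecutive lists of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def run_throttled(statements, execute, batch_size=100, pause_seconds=1.0):
    """Run statements in small batches, pausing between batches.

    `execute` is a hypothetical callable that sends one statement to the
    database (for example a DB-API cursor's execute method).
    """
    done = 0
    for batch in batches(statements, batch_size):
        for statement in batch:
            execute(statement)
        done += len(batch)
        if done < len(statements):
            time.sleep(pause_seconds)  # give the database room to catch up
    return done
```

Start with a small batch size and a generous pause, watch how the database behaves, and only then increase the batch size or shorten the pause step by step.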

Last but not least, we sometimes also see our users wanting to start over with the import and then running into the following error returned by our connection test:

Error checking server configuration: found existing Vitess state on the external database

This happens because, to import your database, we create a temporary database named _vt on your current primary. We clean it up automatically when finishing up the import, but if you decide to start over, we cannot automatically detect that. In that case, you will need to drop the _vt database from the current primary first before you can reattempt the import.

Long story short, we know that importing large amounts of data can be daunting and it can be especially frustrating if there's a break somewhere in the process. If you're planning to import terabytes of data, chances are we are already working closely with you. In any other case, if you're running into trouble with your import, please open a ticket with us or open an issue in our discussions board, and we're happy to get involved.

Frequently hit timeouts

One last common issue you may run into is our configured hard timeouts, mainly our 20-second transaction timeout and our 900-second query timeout. These timeouts are set deliberately; they exist for performance reasons and to encourage good application design. We are looking into ways to lift or at least extend them, but for the time being, they need to be considered hard timeouts.

When you hit our 20-second transaction timeout, you will usually see the following error message:

vttablet: rpc error: code = Aborted desc = transaction <transaction>: in use: in use: for tx killer rollback (CallerID: planetscale-admin)

When you hit the 900-second query timeout instead, you will see an error message similar to this one:

target: example-db.-.primary: vttablet: rpc error: code = Canceled desc = (errno 2013) due to context deadline exceeded, elapsed time: 15m0.002989349s, killing query ID 65535 (CallerID: <id>)

These timeouts can be reached with complex transactions or queries, but most of the time, the cause is the user's application keeping the transaction open while it handles other tasks, such as data manipulation or sorting, instead of closing the transaction first. Code inside loops, such as while <expression>, until <expression>, or for loops, is particularly susceptible to that.
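
To illustrate the pattern we recommend instead, here is a minimal sketch that does all the application-side work first and opens the transaction only for the writes themselves. It uses Python's built-in sqlite3 module purely as a runnable stand-in for a real MySQL driver. The table, column, and function names are hypothetical.

```python
import sqlite3


def save_scores(conn, raw_scores):
    """Do the slow work first, then write everything in one short transaction."""
    # Slow, application-side work happens before any transaction is opened.
    prepared = sorted((name.strip().lower(), int(score)) for name, score in raw_scores)

    # `with conn:` commits on success and rolls back on error, so the
    # transaction only spans the inserts themselves.
    with conn:
        conn.executemany("INSERT INTO scores (name, score) VALUES (?, ?)", prepared)
    return len(prepared)


conn = sqlite3.connect(":memory:")  # sqlite3 is only a stand-in for a real driver
conn.execute("CREATE TABLE scores (name TEXT, score INTEGER)")
save_scores(conn, [(" Bob ", "7"), ("alice", "9")])
```

The important part is the ordering: cleaning and sorting happen while no transaction is open, so the time the transaction stays open depends only on the writes.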

There is a workaround to lift our configured timeouts and that is to change the workload mode from OLTP (Online transactional processing) to OLAP (Online analytical processing).

We generally recommend against using it, though, as it can cause rather drastic side effects such as a workload consuming all available resources or blocking other important, short-lived queries or transactions from completing, or overloading a database up to a point where it goes into an unrecoverable state and where manual intervention is needed. It can also block planned failovers or critical updates and will make it easier to hit other intentional limits or timeouts dictated by MySQL.

Again, we do not recommend changing your database's workload mode. There is almost always a better solution.

However, if you still want to try this out, you can switch to OLAP by issuing a set workload='olap'; on a per-session basis, meaning you would have to directly execute it before running the affected transaction. The workload cannot be changed globally, and it will reset to OLTP after you have closed the session.
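
Because the setting is per session, the statement and the affected query have to go through the same connection. The sketch below shows one way to keep the two together. The cursor is any DB-API style cursor bound to a single session. The helper name and the explicit switch back to 'oltp' afterwards are assumptions of this sketch, not an official PlanetScale helper.

```python
def run_in_olap(cursor, query):
    """Run one query with the OLAP workload set on this session only.

    `cursor` is any DB-API style cursor bound to a single session; the
    function name and the reset to 'oltp' afterwards are assumptions of
    this sketch, not an official PlanetScale helper.
    """
    cursor.execute("set workload='olap'")
    try:
        cursor.execute(query)
        return cursor.fetchall()
    finally:
        # Switch back so later statements on a reused session keep the usual timeouts.
        cursor.execute("set workload='oltp'")
```

Switching back explicitly matters mostly when connections are pooled, because a pooled session may be reused by unrelated code long before it is closed.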

The best long-term solution is still to optimize your database's schema and your application's transactions and control structures so that its workloads fit into the 20-second time window. For simple workloads, consider using optimistic locking instead of transactions, and for more complex workloads, consider adopting Sagas. For large ETL workloads, we support data integration engines such as Airbyte and Stitch, with which you can offload these processes to other platforms that are more specialized in this field.
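
If optimistic locking is new to you, the idea is to store a version number with each row and to make every update conditional on the version you originally read. The following is a minimal sketch, again using Python's built-in sqlite3 module as a runnable stand-in for a real MySQL driver. The items table, its columns, and the function name are hypothetical.

```python
import sqlite3


def update_if_unchanged(conn, row_id, new_value, expected_version):
    """Apply the update only if nobody changed the row since we read it."""
    with conn:
        cur = conn.execute(
            "UPDATE items SET value = ?, version = version + 1 "
            "WHERE id = ? AND version = ?",
            (new_value, row_id, expected_version),
        )
    # rowcount is 0 when the version no longer matches: re-read and retry.
    return cur.rowcount == 1


conn = sqlite3.connect(":memory:")  # stand-in for a real MySQL driver
conn.execute("CREATE TABLE items (id INTEGER PRIMARY KEY, value TEXT, version INTEGER)")
conn.execute("INSERT INTO items VALUES (1, 'old', 1)")
conn.commit()
```

The read, the application-side work, and the write are no longer wrapped in one long transaction. Only the single conditional UPDATE touches the database, and a return value of False tells the application to re-read the row and try again.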

To help you with optimizing your queries and transactions, PlanetScale provides you with additional tools such as Insights. And, if you need a hand with any of this, please open a ticket with us or open an issue in our discussions board.