Patterns for Postgres Traffic Control

Josh Brown [@0ximjosh] |

Last week we introduced Database Traffic Control™. Traffic Control lets you attach resource budgets to slices of your Postgres traffic, like keeping your checkout flow running while a runaway analytics query gets shed instead. We have already discussed some scenarios where you should use Traffic Control, along with how to define resource limits, so now let's dig into what Traffic Control looks like in your codebase.

This post walks through some practical patterns in Go. Each pattern targets a different failure mode, architecture, or foot gun. Most of them layer on top of one another too, so you can adopt them individually or combine them for extra peace of mind. Keep in mind the general concepts here are applicable to whatever language your application is written in.

Setup

Most of the patterns here rely on custom tags attached to your queries. Traffic Control reads these using the SQLCommenter format: a SQL comment appended to each query with URL-encoded key=value pairs.

SELECT * FROM orders

WHERE user_id = $1

/*route='checkout',feature='new_order_flow'*/;

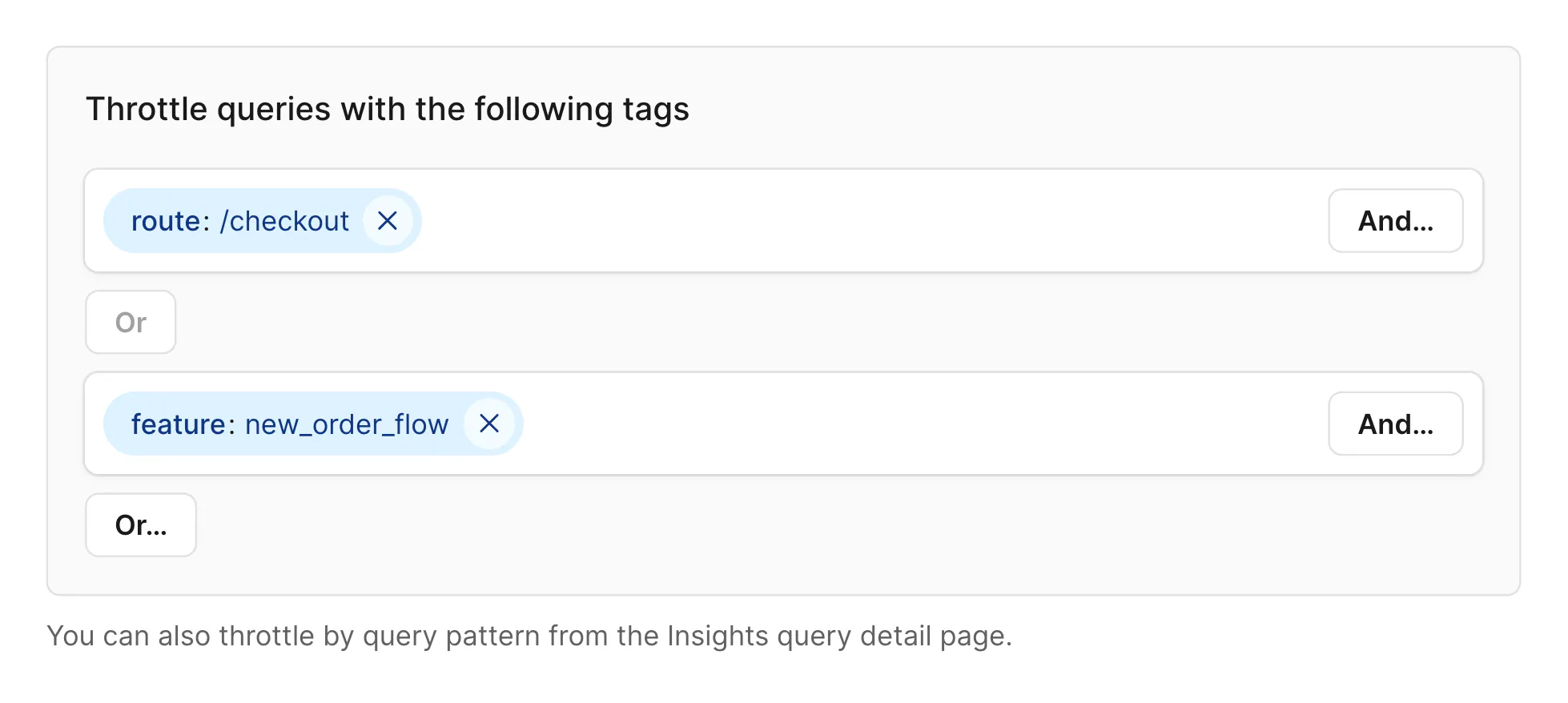

These tags are then available for new Traffic Control rules.

Here's a minimal Go helper that appends tags in this format:

import (

"fmt"

"net/url"

"sort"

"strings"

)

// appendTags appends SQLCommenter-format tags to a SQL query.

func appendTags(query string, tags map[string]string) string {

if len(tags) == 0 {

return query

}

parts := make([]string, 0, len(tags))

for k, v := range tags {

parts = append(parts, fmt.Sprintf("%s='%s'", k, url.QueryEscape(v)))

}

sort.Strings(parts) // deterministic order

return query + " /*" + strings.Join(parts, ",") + "*/"

}

You'll also want a way to thread tags through your call stack without touching every function signature. A context key works well for this:

type contextKey string

const sqlTagsKey contextKey = "sql_tags"

func tagsFromContext(ctx context.Context) map[string]string {

if tags, ok := ctx.Value(sqlTagsKey).(map[string]string); ok {

// return a copy so callers can't mutate shared state

out := make(map[string]string, len(tags))

for k, v := range tags {

out[k] = v

}

return out

}

return make(map[string]string)

}

func contextWithTags(ctx context.Context, tags map[string]string) context.Context {

return context.WithValue(ctx, sqlTagsKey, tags)

}

With these two helpers in place, the patterns below mostly just set keys and values in context. Tagging happens automatically when the query executes.

Per-service isolation via Roles

In a microservice architecture, a single misbehaving service should not be able to degrade every other service sharing the same database. The simplest way to isolate a service is to create a Traffic Control rule based on a unique connection string for the given service, or via application name.

A budget on username='pscale_api_123abc' will isolate all traffic from that role. This also helps in incident response: you can immediately cap a service's resource share without redeploying anything.

Note that the username is the internal Postgres username of the role, not the dashboard role name. You can also target custom roles created by CREATE ROLE if your microservices have strict security over table permissions.

You can also use the application_name by appending it to your connection strings such as postgresql://other@localhost/otherdb?application_name=myapp.

Route-level tagging in an HTTP service

When you're running a monolith or a large API service, the problem isn't usually the whole service, it's specific routes. The /api/export endpoint that generates CSV reports should not be able to kill the /api/checkout flow.

An HTTP middleware can inject the route into context at runtime before any handler runs:

// Any route using SQLTagMiddleware will have the pattern injected into its context

// dynamically at runtime

func SQLTagMiddleware(next http.Handler) http.Handler {

return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

tags := tagsFromContext(r.Context())

route := strings.ReplaceAll(strings.ReplaceAll(r.Pattern, "{", ":"), "}", ":") // Removes "{}" characters from route

tags["route"] = route

tags["app"] = "web"

ctx := contextWithTags(r.Context(), tags)

next.ServeHTTP(w, r.WithContext(ctx))

})

}

Wrap your database calls to pick up the tags automatically:

// QueryContext for SELECT statements

func (db *DB) QueryContext(ctx context.Context, query string, args ...any) (*sql.Rows, error) {

return db.sql.QueryContext(ctx, appendTags(query, tagsFromContext(ctx)), args...)

}

// ExecContext for INSERT/UPDATE statements

func (db *DB) ExecContext(ctx context.Context, query string, args ...any) (sql.Result, error) {

return db.sql.ExecContext(ctx, appendTags(query, tagsFromContext(ctx)), args...)

}

Now every query carries the route it came from. You can create a Traffic Control budget targeting route='/api-export' and give it a conservative CPU limit.

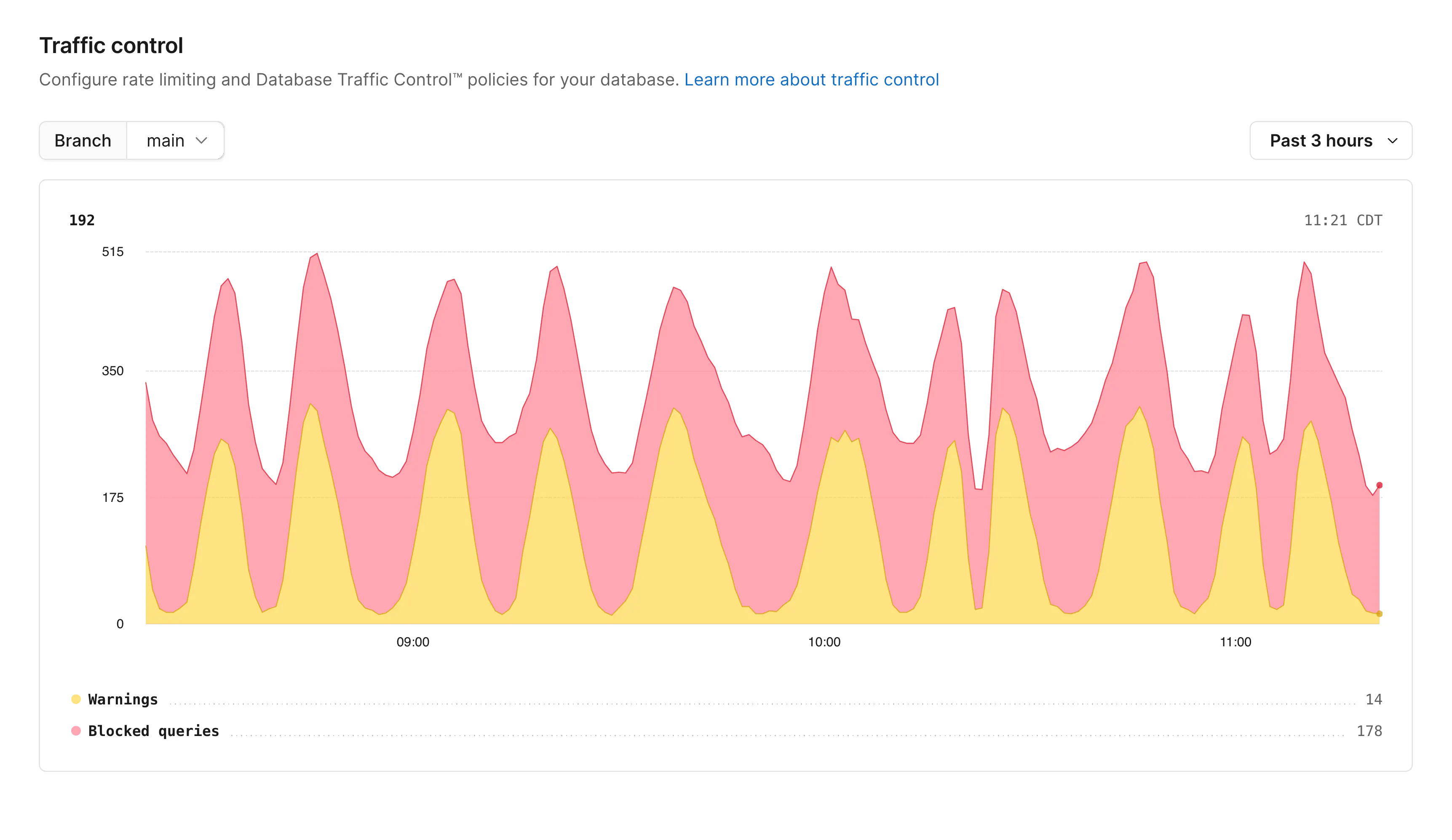

This also makes it easy to set up broad budgets during incidents. If you suddenly see a spike and don't know which route is responsible, the violation graph in Traffic Control will show you exactly which route tag is hitting limits.

Feature flags and new deployments

Shipping a new feature to production always carries risk. Maybe the new query pattern is fine under your test load but becomes expensive at scale. Traffic Control gives you a way to cap the blast radius before it becomes an incident.

The simplest version sets a tag from an environment variable at startup:

var deploymentTag = os.Getenv("DEPLOYMENT_TAG") // e.g. "new_checkout_v2" or git sha "96e350426"

func tagWithDeployment(ctx context.Context) context.Context {

if deploymentTag == "" {

return ctx

}

tags := tagsFromContext(ctx)

tags["feature"] = deploymentTag

return contextWithTags(ctx, tags)

}

Set DEPLOYMENT_TAG=new_checkout_v2 when rolling out new pods and leave it unset on the old pods. Traffic Control can then have a budget on feature='new_checkout_v2' in Warn mode from day one, so you see exactly how the new code behaves before it causes problems. When you're confident, either remove the budget or switch it to Enforce as a safety net.

For feature flags controlled at runtime, the same approach works but driven by your flag evaluation:

func (h *OrderHandler) ServeHTTP(w http.ResponseWriter, r *http.Request) {

ctx := r.Context()

if flags.Enabled(ctx, "new_order_flow") {

tags := tagsFromContext(ctx)

tags["feature"] = "new_order_flow"

ctx = contextWithTags(ctx, tags)

}

h.processOrder(ctx, w, r)

}

Tier-based limits in multi-tenant apps

In a SaaS application, free-tier users should not be able to degrade the experience for enterprise customers. Traffic Control lets you enforce this at the database level rather than just at the application layer.

Inject the user's subscription tier into the SQL tags early in your request handling — ideally right after you've resolved the authenticated user:

type Tier string

const (

TierFree Tier = "FREE"

TierPro Tier = "PRO"

TierEnterprise Tier = "ENTERPRISE"

)

func WithUserTier(ctx context.Context, tier Tier) context.Context {

tags := tagsFromContext(ctx)

tags["tier"] = string(tier)

return contextWithTags(ctx, tags)

}

In your authentication middleware:

func AuthMiddleware(users *UserService, next http.Handler) http.Handler {

return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

user, err := users.Authenticate(r)

if err != nil {

http.Error(w, "unauthorized", http.StatusUnauthorized)

return

}

ctx := WithUserTier(r.Context(), user.Tier)

next.ServeHTTP(w, r.WithContext(ctx))

})

}

With this in place, create two Traffic Control budgets:

tier='free'— conservative limits on server share and max concurrent queriestier='pro'— moderate limits

Leave enterprise traffic unbudgeted or give it a high budget as a ceiling. When a free-tier user runs an expensive dashboard or triggers a slow query, the budget sheds that traffic before it touches enterprise workloads.

You can combine this with the route tag from Pattern 2. A budget matching tier='free' AND route='api-export' can be stricter than a budget on tier='free' alone. Enterprise export requests get more headroom than free-tier export requests.

Background jobs and scripts

Background jobs are a common cause of database incidents. A migration script, a nightly sync, or a one-off data backfill can all accidentally saturate your database if they run faster than expected. Traffic Control is a clean way to give these jobs a resource ceiling without having to tune query-level timeouts throughout your codebase.

For long-running background workers, use a dedicated connection pool with a distinct application_name:

func newJobDB(dsn string) (*sql.DB, error) {

jobDSN, err := url.Parse(dsn) // your connection string

if err != nil {

return nil, err

}

q := jobDSN.Query()

// This sets the application name in code instead of in the connection string env variable.

q.Set("application_name", "background-jobs")

jobDSN.RawQuery = q.Encode()

db, err := sql.Open("pgx", jobDSN.String()) // connects to Postgres

if err != nil {

return nil, err

}

db.SetMaxOpenConns(4) // Jobs don't need high concurrency

return db, nil

}

newJobDB takes the DSN of your database and sets application_name to background-jobs before connecting. Once connected we set the max connections to 4 to make sure our background job isn't taking up more workers than it should, and finally we return it so that the calling function can now query the database.

Setting application_name on the connection string level in code ensures that it is always set for this service, no matter the query or connection string given. You can pair this with SQL comments as described above for even more fine-grained control and insights into your queries.

For one-off scripts and migrations we can do something similar. Here we encode the script's identity directly in the connection string so it shows up clearly in Traffic Control and Insights:

// Returns a database instance with the `application_name` set to `script-[scriptName]`

// for use in scripts

func scriptDB(dsn, scriptName string) (*sql.DB, error) {

u, _ := url.Parse(dsn)

q := u.Query()

q.Set("application_name", "script-"+scriptName) // e.g. "script-backfill-order-totals"

u.RawQuery = q.Encode()

return sql.Open("pgx", u.String())

}

Create a Traffic Control budget for application_name='background-jobs' in Warn mode before you run this job next. Observe how much of the database's resources your background work typically consumes. Then switch to Enforce to cap it at a level where it can't crowd out interactive traffic even if a job goes sideways.

Handling blocked queries

When Traffic Control is in Enforce mode and a query exceeds its budget, Postgres returns SQLSTATE 53000 with an error message prefixed with [PGINSIGHTS] Traffic Control:. Your application needs to handle this without crashing.

With pgx/v5:

import (

"errors"

"github.com/jackc/pgx/v5/pgconn"

)

const sqlstateTrafficControl = "53000"

func isTrafficControlError(err error) bool {

var pgErr *pgconn.PgError

return errors.As(err, &pgErr) && pgErr.Code == sqlstateTrafficControl

}

The right response depends on the query's role in your application:

func (s *OrderService) GetUserOrders(ctx context.Context, userID int64) ([]Order, error) {

rows, err := s.db.QueryContext(ctx, `SELECT id, total FROM orders WHERE user_id = $1`, userID)

if err != nil {

if isTrafficControlError(err) {

// Return a degraded response rather than a 500

return nil, ErrServiceUnavailable

}

return nil, err

}

defer rows.Close()

// ...

}

For non-critical workloads like analytics or reporting, returning a 503 Service Unavailable or a cached result is most likely the right behavior. That's exactly the controlled failure mode Traffic Control is designed to create. For more critical paths, you may want a short retry with backoff:

func queryWithBackoff(ctx context.Context, db *sql.DB, query string, args ...any) (*sql.Rows, error) {

const maxRetries = 3

backoff := 100 * time.Millisecond

for attempt := range maxRetries {

rows, err := db.QueryContext(ctx, query, args...)

if err == nil {

return rows, nil

}

if !isTrafficControlError(err) || attempt == maxRetries-1 {

return nil, err

}

select {

case <-time.After(backoff):

backoff *= 2

case <-ctx.Done():

return nil, ctx.Err()

}

}

return nil, errors.New("overloaded")

}

Observing warn-mode notices

Before switching a budget to Enforce, you'll run it in Warn mode. In Warn mode, queries succeed but the driver receives a Postgres notice containing [PGINSIGHTS] Traffic Control:. With pgx/v5 you can log these notices to build an accurate picture of what would be blocked:

import "github.com/jackc/pgx/v5"

config, err := pgx.ParseConfig(dsn)

if err != nil {

log.Fatal(err)

}

config.OnNotice = func(c *pgconn.PgConn, notice *pgconn.Notice) {

if strings.Contains(notice.Message, "[PGINSIGHTS] Traffic Control:") {

log.Printf("traffic control warning: %s", notice.Message)

// Increment a metric, write to a structured log, etc.

}

}

Collect these logs for a few hours of representative traffic before switching to Enforce. The pattern of which rules fire and how often tells you whether your limits need adjustment.

Putting it together

These patterns compose. A real application might layer several of them:

func (s *Server) setupMiddleware() http.Handler {

mux := http.NewServeMux()

// register routes...

var handler http.Handler = mux

handler = SQLTagMiddleware(handler) // Pattern 2: route tags

handler = AuthMiddleware(s.users, handler) // Pattern 4: tier tags

return handler

}

// At startup, the job worker uses Pattern 5: Background jobs

jobDB, _ := newJobDB(dsn)

// New features use Pattern 3:

// DEPLOYMENT_TAG=new_checkout_v2 set in the deployment manifest

Traffic Control sees all of this as a combination of tags. A budget on tier='free' covers all free-tier traffic regardless of route. A budget on route='api-export' AND tier='free' covers a specific combination. Multiple matching budgets all apply simultaneously and queries must satisfy every budget they match. You can build layered policies without complicated rule logic.

Start in Warn mode, observe which budgets would fire during normal load, tighten the limits until only pathological cases trigger violations, then switch to Enforce. The getting started guide walks through this rollout process in detail.

The difference between a database outage and a degraded experience often comes down to whether you've decided in advance which traffic to shed. Traffic Control makes that decision explicit and configurable instead of leaving it to whichever query happens to win a resource race.